Hello again Factorians!

The GFX department is back today to cover the process of making graphics for Factorio.

I'll get a bit technical, but not so much that it becomes absolutely boring.

What follows is a summarized description of the actual process, so if you are a Factorio modder, this might be interesting for you.

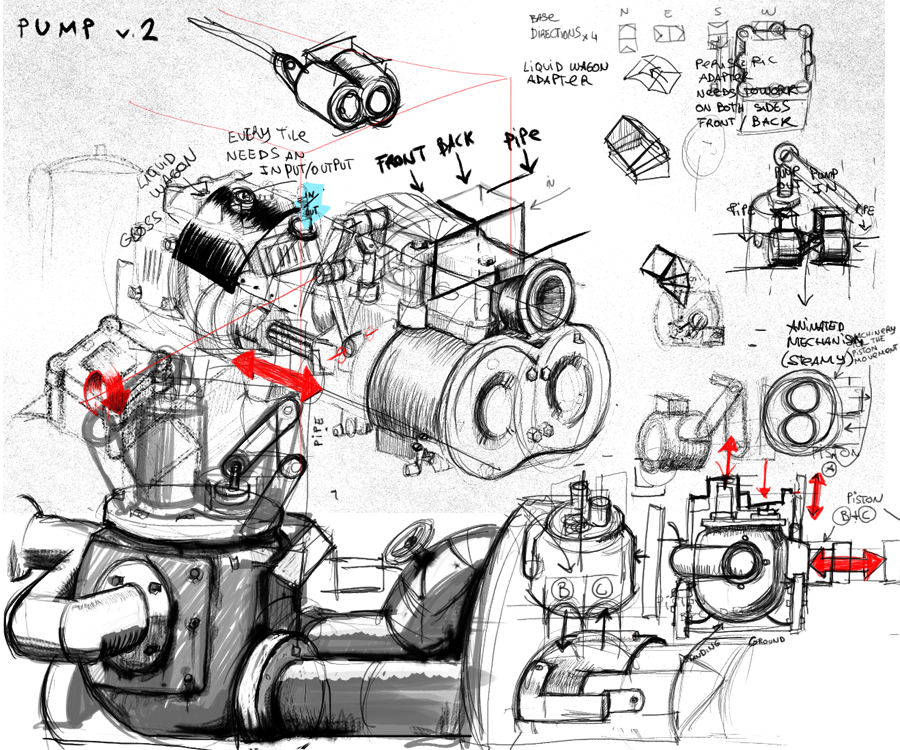

The start of a new entity

The game designer (kovarex) realizes that Factorio would be better with the addition of a new entity and speaks with the team about it. Most of the time he makes a prototype with a placeholder graphic and improves the behaviour of the new entity by observing it and playing with it. Once the entity is solid, we have a meeting together. We talk about guidelines, and I contribute the visual requirements: colours, animations, different layers, mood, etc.

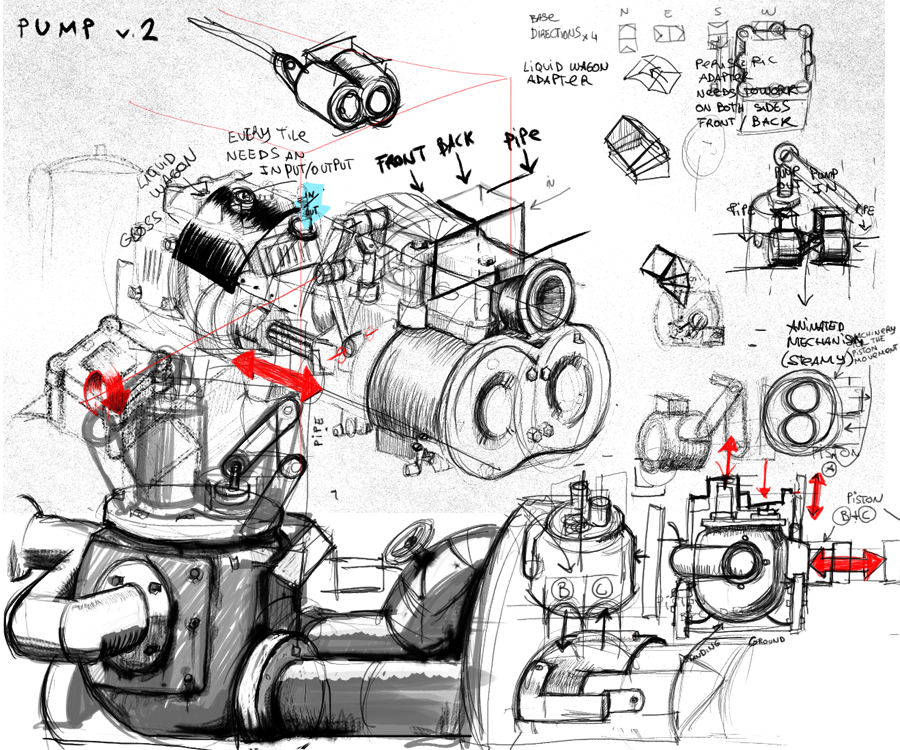

Right now I'm in the middle of this process with the new pumps. Here is the start of the sketching for a new entity:

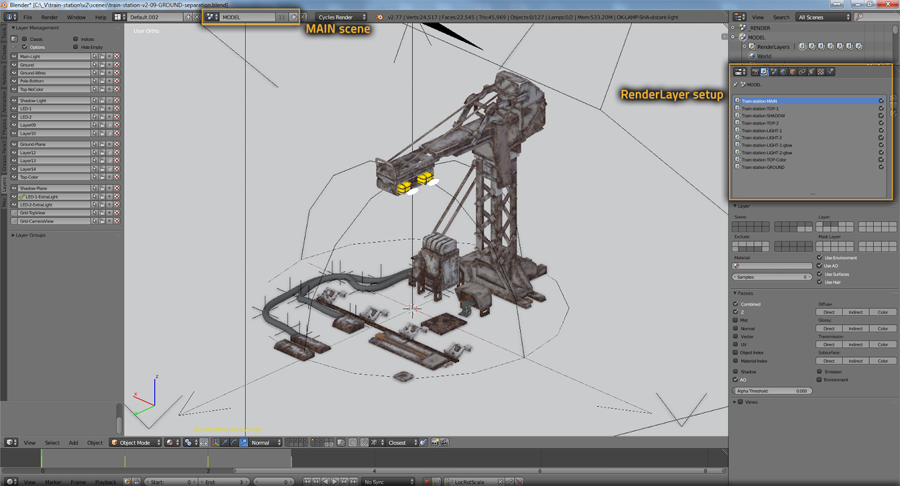

3D modeling with Blender

Once the concept and the technical requirements are clear, we jump into Blender for modeling.

Blender is a great piece of software that gives us a very flexible workflow and takes care of all our needs for the Factorio engine.

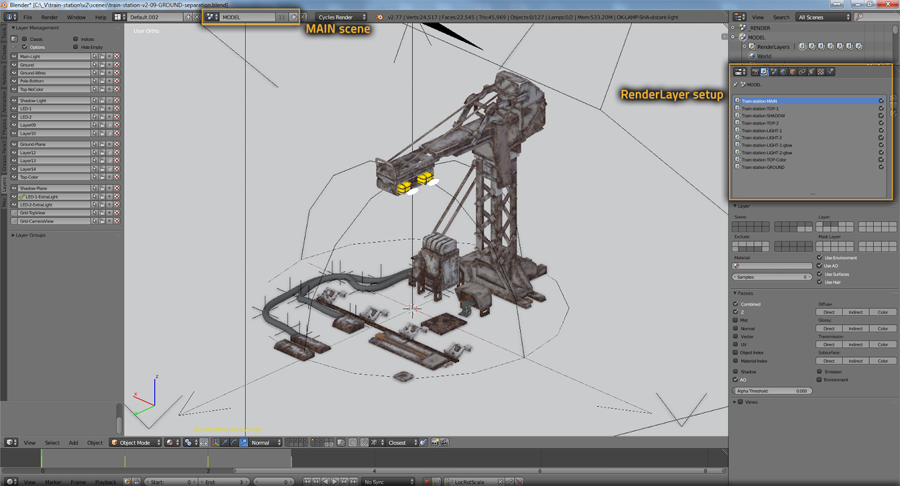

Most of the time, a single entity in Factorio is pretty complex: it is rendered in multiple layers and assembled again in the engine in order to work as we want. For this we need multiple layers and outputs in Blender.

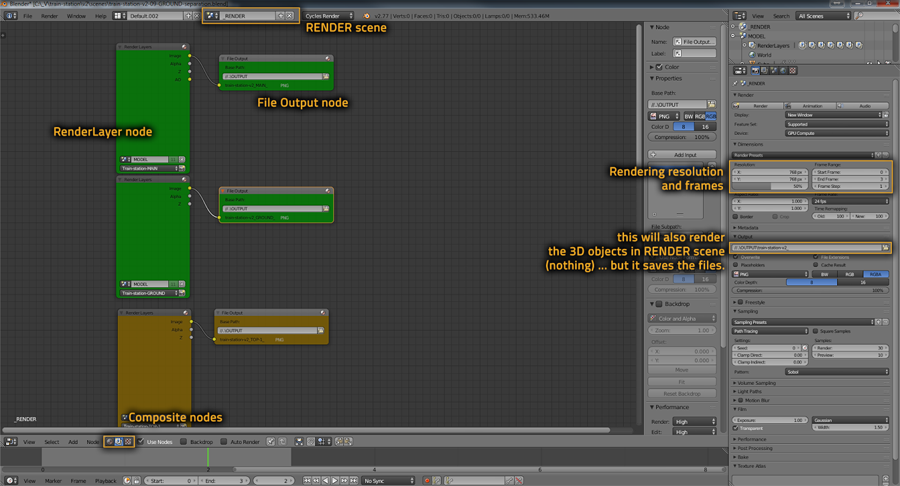

It is also very common that once the renders are finished and the whole process is done, the entity is integrated into the game and some changes turn out to be necessary. It is therefore very convenient to have the blend file ready for changes with minimum effort. To be able to re-render by clicking just one button, we need to use RenderLayers and/or separate scenes, possibly with the addition of linked Group instances.

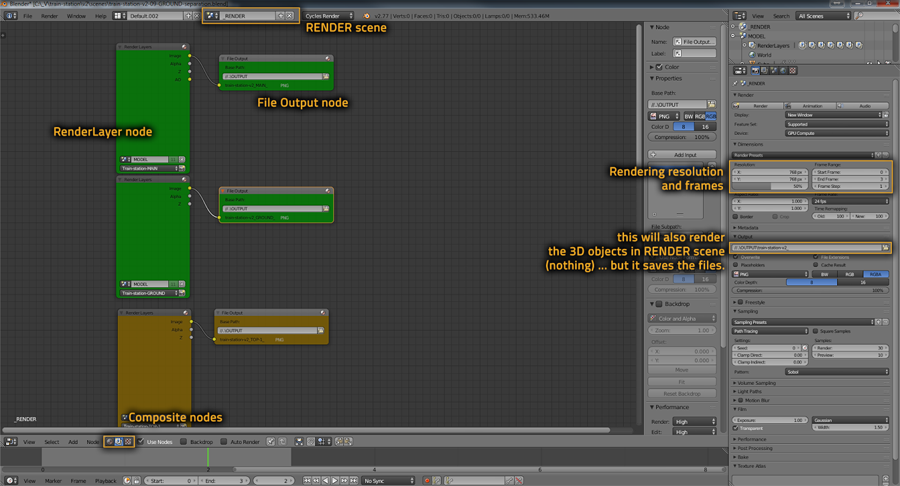

Scenes are always split into MODEL scene(s) and RENDER scene(s). The RENDER scene then links to all the necessary scene RenderLayers via compositor nodes.

Splitting the scenes is necessary because compositor nodes are activated even when pressing F12, which could, among other things, accidentally overwrite the render outputs.

Another option is batch rendering through a .bat command, but since that doesn't support RenderLayers, it is not viable for us yet.
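For reference, here is a minimal sketch of what such a batch render call could look like, written in Python instead of a .bat file. It only builds the command line for Blender's background mode using the `-b`, `-S` and `-f` options. The file name `pump.blend`, the scene name `RENDER` and the helper `blender_render_command` are made up for illustration, and the sketch does nothing about the RenderLayers limitation mentioned above.

```python
def blender_render_command(blend_file, scene, frame, blender_exe="blender"):
    """Build the argument list for a headless single-frame render.

    All names passed in are hypothetical examples, not our real files.
    """
    return [
        blender_exe,
        "-b", blend_file,  # run without the UI and load this .blend file
        "-S", scene,       # make this scene the active one
        "-f", str(frame),  # render this single frame
    ]


cmd = blender_render_command("pump.blend", "RENDER", 1)
print(" ".join(cmd))
# To actually launch it (requires Blender on the PATH):
#   import subprocess; subprocess.run(cmd, check=True)
```

Building the arguments as a list rather than one long string avoids quoting problems with paths that contain spaces.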

Photoshop post-production

Almost every one of our sprites has gone through Photoshop at some point. We paint hand-drawn masks to reinforce contrast, make edges clearer, define the shape of entities better, and so on.

In Photoshop, the general approach is that we take the rendered image (preferably via the Place Linked function), duplicate it twice, set the duplicates to the Multiply and/or Screen blend modes, and give them empty (black) masks.
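To see why this setup works, it helps to look at the arithmetic behind it. The sketch below uses the standard Multiply and Screen formulas on a single channel value in the range 0 to 1; the function names are just for illustration, and it is a simplification of what Photoshop does with full images.

```python
def multiply(base, layer):
    """Multiply blend: the result is never brighter than the base."""
    return base * layer


def screen(base, layer):
    """Screen blend: the result is never darker than the base."""
    return 1.0 - (1.0 - base) * (1.0 - layer)


def apply_masked(base, blended, mask):
    """Mix the blended result over the base; mask 0 = black, 1 = white."""
    return base * (1.0 - mask) + blended * mask


pixel = 0.5  # a mid-grey pixel from the render

# With a black mask the duplicate has no effect at all.
untouched = apply_masked(pixel, multiply(pixel, pixel), mask=0.0)

# Painting white into the mask reveals the darkening or lightening.
darkened = apply_masked(pixel, multiply(pixel, pixel), mask=1.0)
lightened = apply_masked(pixel, screen(pixel, pixel), mask=1.0)

print(untouched, darkened, lightened)
```

Because the duplicates start with black masks, the image is unchanged until we paint into a mask, so shadows (Multiply) and highlights (Screen) appear only where we want them.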

It is always better to get the best render results directly from Blender, but the reality is that many times we need a second round of tweaks.

Here is an example of the importance of post-production:

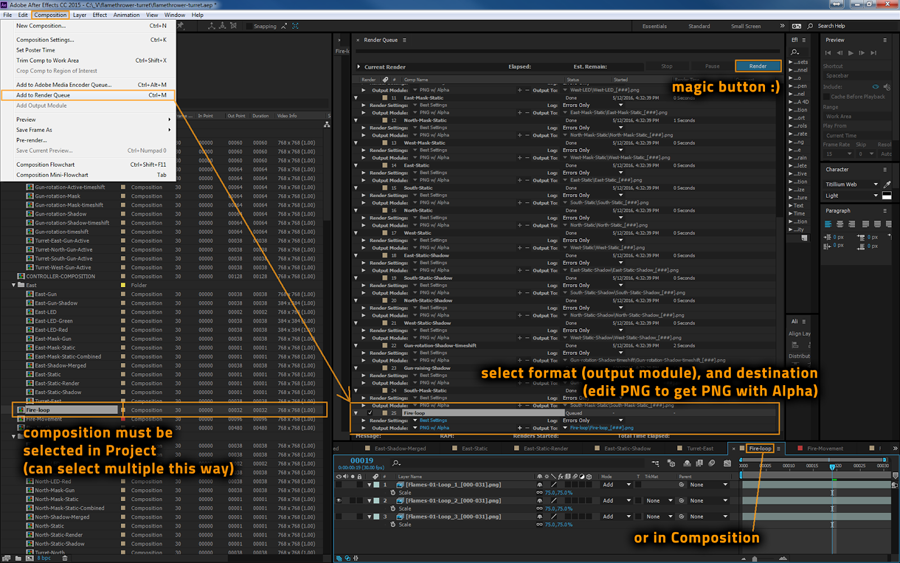

After Effects processing

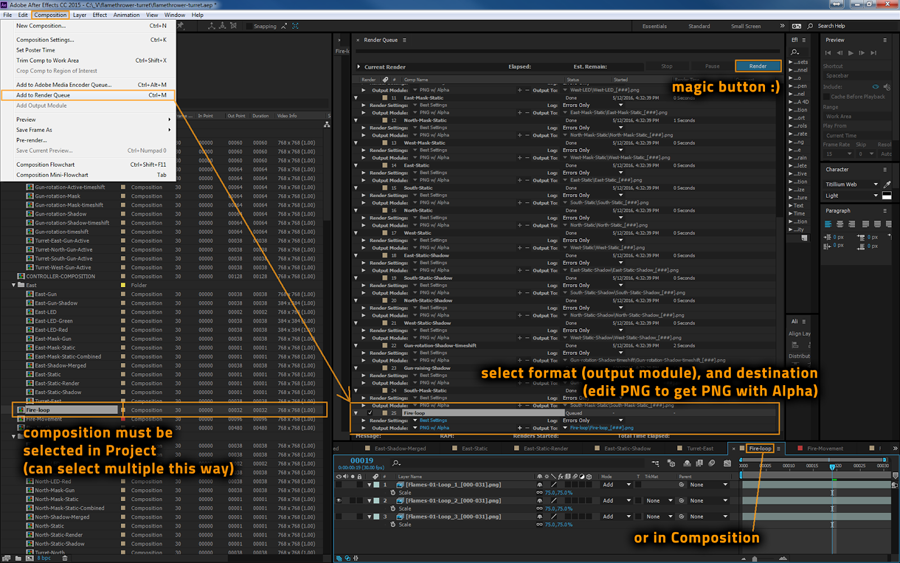

Because projects often get very large, with many output sprites, we need to automate the rendering process, and for that we use After Effects. We can combine the hand-drawn post-production in Photoshop with After Effects by importing the PSD files and/or separate layers from them.

The biggest hurdle in adopting the After Effects (Ae) workflow tends to be getting used to the layering system when putting compositions inside other compositions.

A good comparison is using Groups in Photoshop. In After Effects this is called pre-composing.

Often you need to pre-compose, that is, create multiple levels of compositions, in order to reach the desired results. This is likely to happen when:

- You want to treat something as one unit when moving, rotating, scaling, ...
- You want to render some things separately.
- You want to apply an effect to just some of the layers below, but not all.
- You want to apply additive blending to a layer that should affect something other than just the entity (like fire on the flamethrower turret).
- You want to use multiple layers as a matte.
- You want to render various time regions from the same composition (if you have multiple different outputs in one time sequence, like the cargo wagon, or when rendering shiftings).
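The additive blending case from the list above is easy to show with numbers. This is a minimal sketch with made-up single-channel values, not something taken from our project files: it shows that the result of an additive layer depends on whatever lies underneath, which is why such a layer has to stay separate instead of being baked into the entity.

```python
def add_blend(below, layer):
    """Additive blend of one channel, clamped to the 0..1 range."""
    return min(1.0, below + layer)


fire = 0.5          # hypothetical brightness of the fire layer
dark_turret = 0.25  # hypothetical pixel of the entity itself
bright_ground = 0.75  # hypothetical pixel of the terrain next to it

on_turret = add_blend(dark_turret, fire)
on_ground = add_blend(bright_ground, fire)

print(on_turret, on_ground)
```

The same fire value brightens the dark turret but saturates on the bright ground, so the fire cannot be blended correctly against one fixed background in advance.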

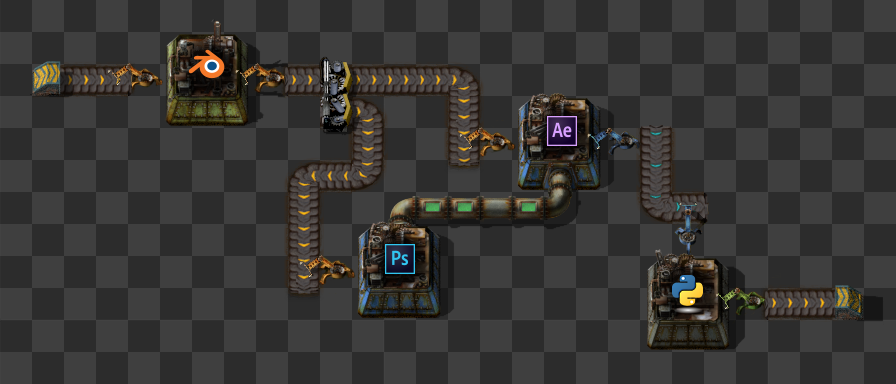

Python, the final step

As the last step of our process, we use a Python script, spritesheeter.py, made by the Factorio coders. It creates spritesheets ready for the engine, with the relevant pieces of Lua code in text files, along with GIF previews.
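The real spritesheeter.py is not shown here, but the core idea can be sketched in a few lines. The example below is only an illustration under assumptions: it places equally sized frames in a row-major grid and writes a small Lua table describing the sheet. All function names, the file name and the choice of fields (`filename`, `width`, `height`, `frame_count`, `line_length`) are assumptions made for this sketch, and no image is actually written.

```python
def frame_positions(frame_count, width, height, line_length):
    """Top-left pixel position of each frame in a row-major grid."""
    return [
        ((i % line_length) * width, (i // line_length) * height)
        for i in range(frame_count)
    ]


def sheet_size(frame_count, width, height, line_length):
    """Pixel size of the whole spritesheet."""
    rows = -(-frame_count // line_length)  # ceiling division
    cols = min(frame_count, line_length)
    return cols * width, rows * height


def lua_snippet(filename, frame_count, width, height, line_length):
    """Lua table text describing the sheet (field names are assumed)."""
    return (
        "{\n"
        f'  filename = "{filename}",\n'
        f"  width = {width},\n"
        f"  height = {height},\n"
        f"  frame_count = {frame_count},\n"
        f"  line_length = {line_length},\n"
        "}"
    )


# Hypothetical example: 5 frames of 64x48 pixels, 4 frames per row.
print(frame_positions(5, 64, 48, 4))
print(sheet_size(5, 64, 48, 4))
print(lua_snippet("pump.png", 5, 64, 48, 4))
```

Generating the Lua text from the same numbers that drive the layout means the sprite definition cannot drift out of sync with the image.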

Now we have everything ready to put the new entity into the game and see how it goes. As I said before, though, it is very likely that something is not exactly as we want, and we have to go back to Blender to tweak it again. That's the reason for having a flexible system.

I have to say that this entire system is new for us. Now that there are more artists in the department, we had to standardize it. Big thanks to Vaclav for his hard work documenting the entire process, and for contributing his knowledge of After Effects, which has shown that it can make the workflow very effective once it is set up. I'm still working on this part :)

As usual, comments and thoughts are welcome in the forums.